
Monday, March 2nd, 2020

Poking through 14+ years of posts I find information that’s as useful now as when it was written.

Golden Oldies is a collection of the most relevant and timeless posts during that time.

In August 2016 I wrote Self-driving Tech Not Ready for Primetime and a month later Tesla was hacked. But, as you’ll find out tomorrow, hacking isn’t the only problem — humans are actually way higher on the problem scale. While it’s not easy, hacking dangers can be minimized, but fixing humans is impossible.

Read other Golden Oldies here.

I’ve been writing (ranting?) about the security dangers of IoT and the connected world in general.

Security seems to be an afterthought— mostly after a public debacle, as Chrysler showed when Jeep was hacked.

GM took nearly five years to fully protect its vehicles from the hacking technique, which the researchers privately disclosed to the auto giant and to the National Highway Traffic Safety Administration in the spring of 2010.

Pity the half million at-risk OnStar owners.

A few days ago Tesla was hacked by Chinese white hat Keen Team.

“With several months of in-depth research on Tesla Cars, we have discovered multiple security vulnerabilities and successfully implemented remote control on Tesla Model S in both Parking and Driving Mode.”

They hacked the firmware and could activate the brakes, unlock the doors and hide the rear view mirrors.

Tesla is the darling of the Silicon Valley tech set and Elon Musk is one of the Valley gods, but it still got hacked. And the excuse of being new to connected tech just doesn’t fly.

And if connected car security is full of holes, imagine the hacking opportunities with self-driving cars.

The possibilities are endless. I can easily see hackers, or bored kids, taking over a couple of cars to play chicken on the freeway at rush hour.

Nice girls don’t say, ‘I told you so’, but I’m not nice, so — I told you so.

Image credit: mariordo59

Posted in Golden Oldies, Innovation | No Comments »

Monday, June 17th, 2019

Poking through 13+ years of posts I find information that’s as useful now as when it was written.

Golden Oldies is a collection of the most relevant and timeless posts during that time.

This post and the quote from the FTC date back to 2015. Nothing on the government side has changed; the Feds are still investigating and Congress is still talking. And as we saw in last week’s posts, the company executives are more arrogant and their actions are much worse. One can only hope that the US government will follow in the footsteps of European countries and rein them in.

Read other Golden Oldies here.

Entrepreneurs are notorious for ignoring security — black hat hackers are a myth — until something bad happens, which, sooner or later, always does.

They go their merry way, tying all manner of things to the internet, even contraceptives and cars, and inventing search engines like Shodan to find them, with nary a thought or worry about hacking.

Concerns are pooh-poohed by the digerati and those voicing them are considered Luddites, anti-progress or worse.

Now Edith Ramirez, chairwoman of the Federal Trade Commission, voiced those concerns at CES, the biggest Internet of Things showcase.

“Any device that is connected to the Internet is at risk of being hijacked,” said Ms. Ramirez, who added that the large number of Internet-connected devices would “increase the number of access points” for hackers.

Interesting when you think about the millions of baby monitors, fitness trackers, glucose monitors, thermostats and dozens of other common items available and the hundreds being dreamed up daily by both startups and enterprise.

She also confronted tech’s (led by Google and Facebook) self-serving attitude towards collecting and keeping huge amounts of personal data that was (supposedly) the basis of future innovation.

“I question the notion that we must put sensitive consumer data at risk on the off chance a company might someday discover a valuable use for the information.”

At least someone in a responsible position has finally voiced these concerns — but whether or not she can do anything against tech’s growing political clout/money/lobbying power remains to be seen.

Image credit: centralasian

Posted in Entrepreneurs, Ethics, Golden Oldies, Innovation | No Comments »

Wednesday, June 12th, 2019

You’d have to be living on another planet not to be aware of the isht pulled by Facebook. Where do I start?

With the fact that Facebook is getting fined for storing millions of passwords in plain text or that they “unintentionally” uploaded a million and a half new member email contacts? Or that user data, such as friends, relationships and photos, was used to reward partners and fight rivals? Or might it bother you more to know that your posts, photos, updates, etc., whether public or private, are labeled and categorized by hand by outsourced workers in India? Nastier is Facebook sharing/selling your data to cell phone carriers.

Offered to select Facebook partners, the data includes not just technical information about Facebook members’ devices and use of Wi-Fi and cellular networks, but also their past locations, interests, and even their social groups. This data is sourced not just from the company’s main iOS and Android apps, but from Instagram and Messenger as well. The data has been used by Facebook partners to assess their standing against competitors, including customers lost to and won from them, but also for more controversial uses like racially targeted ads.

Facebook owns Instagram, so it should come as no surprise that the private phone numbers and email addresses of millions of celebrities and influencers were scraped by a partner company.

Then there is Google, which dumps location data from millions of devices, not just Android, into a database called Sensorvault and makes it available for search to law enforcement, among others. On May 7 Google claimed it had found privacy religion, but CNBC reported that Gmail tracks and saves every digital receipt, not just for things, but for services and, of course, Amazon. Enterprise G Suite customers don’t fare much better. Their user passwords were kept unencrypted on an internal server for years. Not hacked, but still…

YouTube is in constant trouble for the way it interprets its constantly changing Terms Of Service.

The list for all of them goes on and on.

The European Union is far ahead of the US in terms of privacy, anticompetitive actions, etc., but US consumers are finally waking up. So-called Big Tech is no longer popular politically and the Justice Department is opening an antitrust investigation of Google (Europe already fined it nearly 3 billion in 2017 for anticompetitive actions).

Can Facebook be far behind?

A bit more next week.

Image credit: MySign AG

Posted in Culture, Ethics | No Comments »

Monday, June 10th, 2019

Poking through 13+ years of posts I find information that’s as useful now as when it was written.

Golden Oldies is a collection of the most relevant and timeless posts during that time.

For years I’ve written about the lie/cheat/steal attitude of social media sites, such as Facebook, Google, Amazon, the list goes on and on. This post is only a year old, but I thought it could use some updating. What I can tell you today is that nothing has improved, in fact it has gotten much worse — as you’ll see over the next two days.

Read other Golden Oldies here.

Have you ever been to a post-holiday potluck? As the name implies, it’s held within two days of any holiday that involves food, with a capital F, such as Thanksgiving, Christmas and, of course, Easter. Our group has only three rules: the food must be leftovers, conversation must be interesting and phones must be turned off. They are always great parties, with amazing food, and Monday’s was no exception.

The unexpected happened when a few of them came down on me for a recent post terming Mark Zuckerberg a hypocrite. They said that it wasn’t Facebook’s or Google’s fault a few bad actors were abusing the sites and causing problems. They went on to say that the companies were doing their best and that I should cut them some slack.

Rather than arguing my personal opinions I said I would provide some third party info that I couldn’t quote off the top of my head and then whoever was interested could get together and argue the subject over a bottle or two of wine.

I did ask them to think about one item that stuck in my mind.

How quickly would they provide the location and routine of their kids to the world at large and the perverts who inhabit it? That’s exactly what GPS-tagged photos do.

I thought the info would be of interest to other readers, so I’m sharing it here.

Facebook actively facilitates scammers.

The Berlin conference was hosted by an online forum called Stack That Money, but a newcomer could be forgiven for wondering if it was somehow sponsored by Facebook Inc. Saleswomen from the company held court onstage, introducing speakers and moderating panel discussions. After the show, Facebook representatives flew to Ibiza on a plane rented by Stack That Money to party with some of the top affiliates.

Granted anonymity, affiliates were happy to detail their tricks. They told me that Facebook had revolutionized scamming. The company built tools with its trove of user data (…) Affiliates hijacked them. Facebook’s targeting algorithm is so powerful, they said, they don’t need to identify suckers themselves—Facebook does it automatically. And they boasted that Russia’s dezinformatsiya agents were using tactics their community had pioneered.

Scraping Android.

Android owners were displeased to discover that Facebook had been scraping their text-message and phone-call metadata, in some cases for years, an operation hidden in the fine print of a user agreement clause until Ars Technica reported. Facebook was quick to defend the practice as entirely aboveboard—small comfort to those who are beginning to realize that, because Facebook is a free service, they and their data are by necessity the products.

I’m not just picking on Facebook; Amazon and Google are right there with it.

Digital eavesdropping

Amazon and Google, the leading sellers of such devices, say the assistants record and process audio only after users trigger them by pushing a button or uttering a phrase like “Hey, Alexa” or “O.K., Google.” But each company has filed patent applications, many of them still under consideration, that outline an array of possibilities for how devices like these could monitor more of what users say and do. That information could then be used to identify a person’s desires or interests, which could be mined for ads and product recommendations. (…) Facebook, in fact, had planned to unveil its new internet-connected home products at a developer conference in May, according to Bloomberg News, which reported that the company had scuttled that idea partly in response to the recent fallout.

Zuckerberg’s ego knows no bounds.

Zuckerberg, positioning himself as the benevolent ruler of a state-like entity, counters that everything is going to be fine—because ultimately he controls Facebook.

There are dozens more, but you can use search as well as I.

What can you do?

Thank Firefox for a simple containerized solution to Facebook’s tracking (stalking) you while surfing.

Facebook is (supposedly) making it easier to manage your privacy settings.

There are additional things you can do.

How to delete Facebook, but save your content.

The bad news is that even if you are willing to spend the effort, you can’t really delete yourself from social media.

All this has caused a rupture in techdom.

I could go on almost forever, but if you’re interested you’ll have no trouble finding more.

Image credit: weisunc

Posted in Communication, Culture, Ethics, Golden Oldies | No Comments »

Wednesday, January 9th, 2019

Nefarious encompasses much of what’s wrong with the prime goal of social media companies — hook users.

I love the word ‘nefarious’; in case you aren’t familiar with it, synonyms include evil, wicked, rotten, treacherous, villainous, and many more.

Hook them and sell them.

Users bear some of the responsibility, but it’s difficult to say no to something that’s not just socially acceptable, but necessary, in spite of it having the addictive power of heroin.

Sure, social media companies need to police their platforms much better, but users need to use their brains when sourcing services.

Assuming information offered by service providers, such as plastic surgeons, on sites like Snapchat and Instagram is truthful, reliable and vetted is just plain stupid.

“I’ve had my before and after photos stolen—used by other doctors as if they’re their own work. I’ve had my own video content—even sometimes with me in it—used by other people,” said Dr. Devgan.

In fact, a 2017 study found that when searching one day’s worth of Instagram posts using popular hashtags—only 18% of top posts were authored by board-certified surgeons, and medical doctors who are not board certified made up another 26%.

Then there are phones — and third party apps.

A friend and I were sitting at a bar, iPhones in pockets, discussing our recent trips in Japan (…) The very next day, we both received pop-up ads on Facebook about cheap return flights to Tokyo.

(…) data you provide is only processed within your own phone. This might not seem a cause for alarm, but any third party applications you have on your phone—like Facebook for example—still have access to this “non-triggered” data. And whether or not they use this data is really up to them.

Google freely admits it reads your Gmail and Android constantly harvests data; all in the name of providing a “more relevant marketing experience.”

Amazon’s Alexa keeps having security problems that are shrugged off as minor ‘oops’, but they aren’t minor when they happen to you.

Google suffers from similar problems, as does every smart product you add to your home.

There’s a lot more, but you can find it faster than I can add it to this post.

The lesson to learn is that privacy and security start with you, because believing that the companies supplying the product/service give a damn flies in the face of the daily increase of evidence to the contrary.

Posted in Culture, Ethics | No Comments »

Wednesday, October 17th, 2018

Are you familiar with the song Where have all the flowers gone?

It was written by Pete Seeger, with additional verses added by others, and the full circle of the song is as valid today as it was when Seeger wrote it nearly 60 years ago.

The refrain at the end of each verse is “Oh, when will they ever learn? Oh, when will they ever learn?” and it became one of the best known protest songs of the Viet Nam War. Fast forward to today and you find proof across the globe that we still haven’t learned.

That refrain also applies, with some rewording, to the war being waged between technical advances and consumer safety and security.

In September, Facebook hesitantly admitted that its access keys were hacked due to flawed code, a hack that potentially affected more than 50 million users, including Zuckerberg and Sandberg.

Facebook explained that the hack was caused by multiple bugs in its code relating to a video-upload tool and Facebook’s pro-privacy “View As” feature. (…)

Most recently, a major flaw was found in the AI code used in personal assistants, such as Alexa, Siri, or Cortana.

Scientists at the Ruhr-Universitaet in Bochum, Germany, have discovered a way to hide inaudible commands in audio files (…) the flaw is in the very way AI is designed. (…) According to Professor Thorsten Holz from the Horst Görtz Institute for IT Security, their method, called “psychoacoustic hiding,” shows how hackers could manipulate any type of audio wave–from songs and speech to even bird chirping–to include words that only the machine can hear, allowing them to give commands without nearby people noticing. The attack will sound just like a bird’s call to our ears, but a voice assistant would “hear” something very different.

The “damn the security / full speed ahead” mentality isn’t anything new.

Nor is the greed that drives it.

There is an old saying, “Fool me once, shame on you. Fool me twice, shame on me.”

“When will they ever learn?”

It’s likely the answer is “never.”

Image credit: koka_sexton

Posted in Business info | 1 Comment »

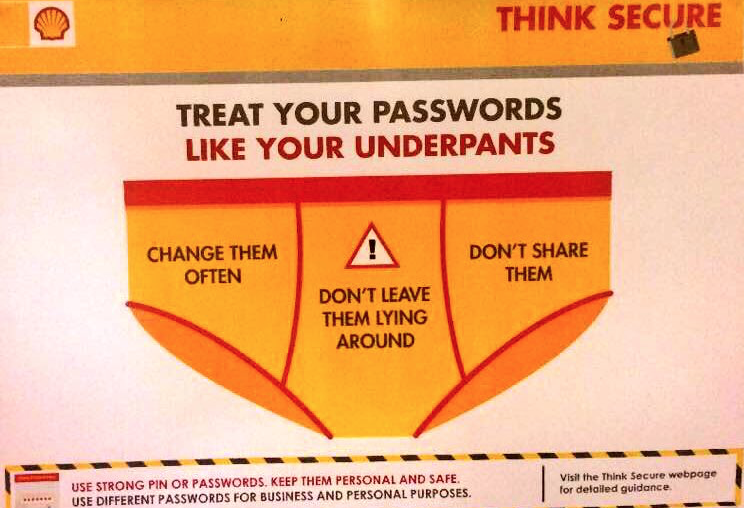

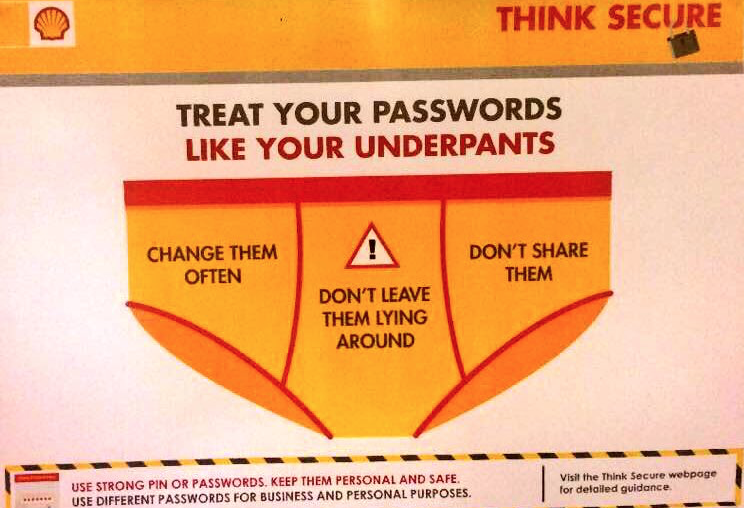

Wednesday, September 5th, 2018

In spite of my dislike of social media I know there is interesting stuff lurking amidst the inane, the garbage and the hate.

Fortunately, much of what I’m missing is referenced in stuff I do read, such as CB Insights, which is where the following showed up.

And here’s a link to my approach to make passwords easy.

Image credit: CB Insights

Posted in Communication, Just For Fun, Personal Growth | No Comments »

Tuesday, June 5th, 2018

There’s an old saying that stuff comes in threes.

A couple of months ago I wrote Privacy Dies as Facebook Lies.

Today I read a new article regarding Facebook’s data-sharing policies with so-called “service providers,” AKA, hardware partners.

Facebook has reached data-sharing partnerships with at least 60 device makers — including Apple, Amazon, BlackBerry, Microsoft and Samsung (…) to expand its reach and let device makers offer customers popular features of the social network, such as messaging, “like” buttons and address books. (…)

Some device partners can retrieve Facebook users’ relationship status, religion, political leaning and upcoming events, among other data. Tests by The Times showed that the partners requested and received data in the same way other third parties did.

Facebook’s view that the device makers are not outsiders lets the partners go even further, The Times found: They can obtain data about a user’s Facebook friends, even those who have denied Facebook permission to share information with any third parties. (…)

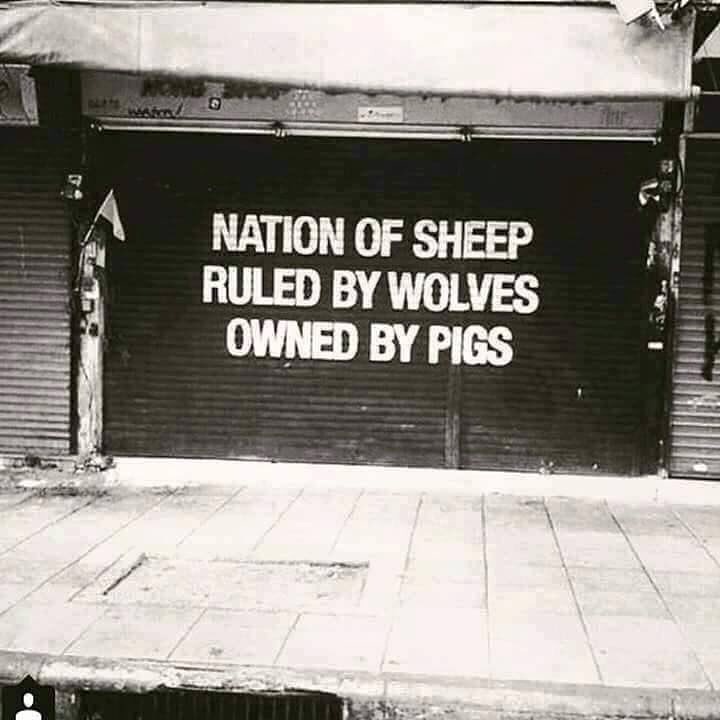

Last Friday KG sent me this image.

Considering the three together made me wonder.

Is Facebook a wolf or a pig?

Or both.

Image credit: Internet meme

Posted in Culture, Ethics, Personal Growth | No Comments »

Friday, January 19th, 2018

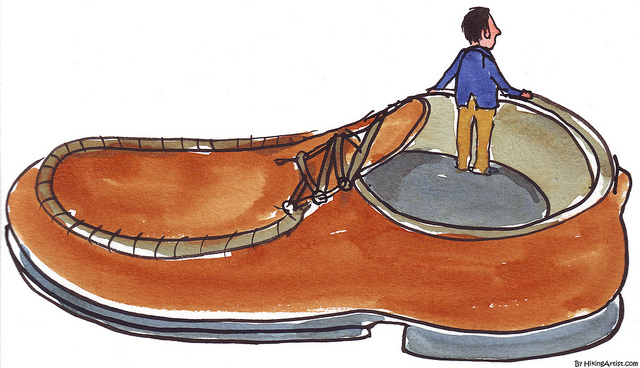

A Friday series exploring Startups and the people who make them go. Read all If the Shoe Fits posts here.

Sometimes predictions are hilarious, especially those about where technology is taking us.

It’s not that they get the tech wrong, but they often don’t factor in the minor details — such as customers.

Media is aglow with stories of how autonomous vehicles (AVs) will literally change the world beyond anything you can imagine.

In a recent survey by AAA, for example, 78% of respondents said they were afraid to ride in an AV. In a poll by insurance giant AIG, 41% didn’t want to share the road with driverless cars. And, ironically, even as companies roll out more capable semi-AVs, the public is becoming less—not more—trusting of AVs, according to surveys over the past 2 years by the Massachusetts Institute of Technology (MIT) in Cambridge and marketing firm J.D. Power and Associates.

And then there’s security.

Every time a software hack is reported, especially from a vulnerability the company knew about two years before it happened, as with Chrysler’s Jeep, or a bank, a retailer, a whatever, people grow more and more aware of just how vulnerable a software-based world that runs on online updates actually is.

Speaking at the National Governors Association meeting last year, Tesla’s Elon Musk said, “I think one of the biggest concerns for autonomous vehicles is somebody achieving a fleet-wide hack.”

The solution?

Mr Musk insists that a kill switch “that no amount of software can override” would “ensure that you gain control of the vehicle and cut the link to the servers”.

But what does control mean to an inert lump of metal that has no gas pedal, brakes, or steering wheel?

The car would just shut down wherever it was — maybe the middle of the freeway at rush hour or a lonely mountain road during a storm.

So customer trust and security are the main obstacles to the AV/tech-enabled world companies large and small are drooling over.

Given most companies’ historically cavalier attitude towards security and the general distrust of auto companies in particular, the result of multiple recalls over the years, changing people’s minds won’t be easy.

And for every step forward a major hack will mean at least three steps back.

Image credit: HikingArtist

Posted in If the Shoe Fits, Innovation | No Comments »

Thursday, April 27th, 2017

A few days ago Miki sent me an article about Tanium giving prospective customers a look into their client hospital’s live network, but without permission or protecting the identity of the hospital completely.

I wrote her back today as follows.

I had not seen this on my own, but I have been reading about the company for a few days now.

Coming from the medtech industry and security specifically I will say this.

The fact that he and his company used live hospital data without their consent will be a deathblow to them.

Hospitals take this very seriously because they are the ones who are held responsible by the Office for Civil Rights under Health and Human Services.

The hospital will be shown to have a vulnerability and will be forced to pay fines, lose out on government funds and potentially face sanctions.

As a result the rest of the healthcare industry will treat Tanium like a pariah because they will not want to face repercussions.

Regardless of the industry, it’s shocking to see how folks think it’s ok to manipulate or abuse customer relationships for their own profit; it always ends badly.

Miki responded.

Sadly, I think they will find a way to smooth it over. Google, Facebook, etc sell customer data all the time. It’s how so many make their money and no one seems to care.

I know HIPAA is supposed to prevent this stuff, but I’m sure companies are getting around that, too; they just haven’t been caught, yet.

That’s the key, not being caught.

Every company that is caught, or just challenged, cries that they take their customers’ privacy seriously or that that’s not what their culture stands for, etc.

But only when they are caught.

I sincerely hope you are correct and that Tanium takes a major blow and, more importantly, that the CEO is forced out, but I’m not holding my breath. I guess I’ve finally gotten pretty cynical about this stuff.

So now I’m trying to decide if Miki’s cynicism is warranted or if I’m right and the publicized results of Tanium’s actions will have the effect they should.

I’ll keep you informed as there are more developments.

Image credit: Wikipedia

Posted in Culture, Ethics, Ryan's Journal | No Comments »


MAPping Company Success

About Miki

Clarify your exec summary, website, etc.

Have a quick question or just want to chat? Feel free to write or call me at 360.335.8054

The 12 Ingredients of a Fillable Req

CheatSheet for InterviewERS

CheatSheet for InterviewEEs™

Give your mind a rest. Here are 4 quick ways to get rid of kinks, break a logjam or juice your creativity!

Creative mousing

Bubblewrap!

Animal innovation

Brain teaser

The latest disaster is here at home; donate to the East Coast recovery efforts now!

Text REDCROSS to 90999 to make a $10 donation or call 00.733.2767. $10 really really does make a difference and you'll never miss it.

And always donate what you can whenever you can

The following accept cash and in-kind donations: Doctors Without Borders, UNICEF, Red Cross, World Food Program, Save the Children
