When What You See Ain’t What You Get

by Miki Saxon

Our apologies for missed and late postings. We were still having technical issues, but they’ve all been handled. (I hope!)

On one hand, some of the things AI can do, such as enabling an iPhone to perform an ultrasound scan, are amazing and encouraging.

Earlier this year, vascular surgeon John Martin was testing a pocket-sized ultrasound device developed by Butterfly Network, (…) he knew that the dark, three-centimeter mass he saw did not belong there. “I was enough of a doctor to know I was in trouble,” he says. It was squamous-cell cancer.

On the other hand, AI is full of human bias, whether intentional or not.

AI tends to be fairly unflattering to anyone with a darker skin tone, and that using AI to judge female beauty is a pretty questionable goal. (…) MakeApp’s unflattering, malfunctioning AI is the latest in a long line of AI controversies: Snapchat’s offensive Bob Marley filter, FaceApp’s “black” filters, and smartphone cameras that lighten your skin by default.

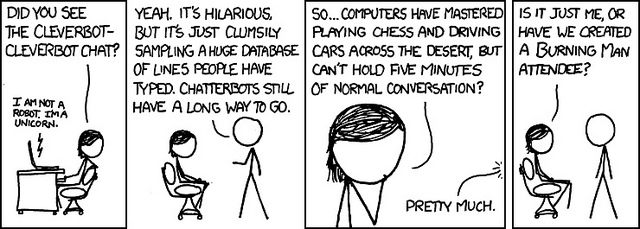

There was a time when the phrase “seeing is believing” was tightly connected to reality.

That connection became shaky with the advent of Photoshop and has been rapidly deteriorating ever since.

Enter AI in the form of generative adversarial networks (GANs).

But images and sound recordings retain for many an inherent trustworthiness. GANs are part of a technological wave that threatens this credibility.

GANs are poised to escalate fake news, discredit evidence (legal, medical, etc.), and force you to question everything, or believe blindly.

Image credit: brett jordan
